Executive summary

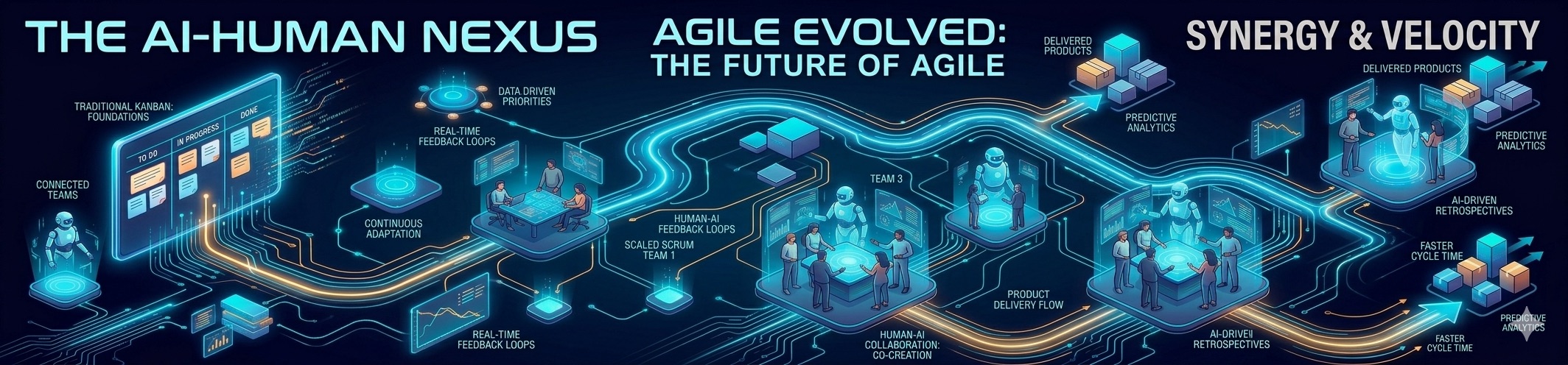

Agile isn’t dying. It’s molting. The next few years will reward people who treat Agile as an operating system for value delivery—not as a “process you follow because the PMO said so.” Recent evidence points to three big forces converging: (1) AI is everywhere, but it’s not magic, and it can even hurt delivery performance if the fundamentals are weak; (2) platform engineering and internal developer platforms are becoming the “new scaling,” because developer experience is now a measurable constraint; and (3) product-centric operating models + value stream management are pulling Agile upward from teams into portfolios, governance, and funding.

A key nuance: while the core Scrum definition is intentionally minimal and stable, the ecosystem around it is expanding through adjunct guidance (flow/Kanban, scaling patterns, evidence-based management, product operating models). That’s where most “modern Agile” change actually happens.

Looking three to five years out from March 2026, the most probable “shape” of the field is:

- AI augmentation becomes baseline for analysis, documentation, testing support, and day-to-day delivery work—but organizations learn (sometimes the hard way) to pair it with quality discipline, small batch sizes, and strong engineering practices to avoid performance drops.

- Platform engineering expands beyond big tech into mainstream enterprises, with “platform as a product” thinking and self-service (“ticket ops” → paved roads/golden paths).

- Hybrid ways of working stabilize: not “everyone remote forever,” but more intentional meeting design, stronger async habits, and explicit social/learning mechanisms to preserve collaboration and psychological safety.

- Metrics mature from output theater (velocity, utilization) toward outcome-, flow-, and reliability-aware measurement (DORA + EBM + OKRs + flow metrics)—with heavier emphasis on avoiding Goodhart’s Law traps.

Practical implication: the future belongs to practitioners who can connect team-level empiricism (Scrum), flow and reliability (Kanban/DORA), and organizational decision systems (product operating model, governance, compliance, value streams). Or, said more sarcastically: the future belongs to the people who can explain Agile to Finance without using the word “ceremony.”

Assumptions, scope, and what “modern Agile” means here

Assumption about voice and style: this article is written from the perspective of a Polish practitioner writing in English, so it uses a professional tone with conversational phrasing and mild opinions. Where I speculate (3–5 year projections), I label it as a projection, not a fact.

Scope: modern trends in Scrum/Agile across teams and enterprises, with attention to delivery systems (DevOps/platform engineering), measurement, governance & compliance, and people factors (autonomy, psychological safety), using sources from roughly 2020–2026 where possible.

What “modern Agile” means in practice:

- Still anchored in Agile values and principles, especially early and continuous delivery and adaptation to change.

- But increasingly executed via a blended system: Scrum + flow/Kanban, plus scaling patterns, plus product discovery/delivery loops, plus DevSecOps and compliance automation.

What’s evolving in Scrum and adjacent practices

Scrum’s official definition emphasizes that it is purposefully incomplete—only the minimum structure required to implement empiricism and lean thinking, leaving practices and tactics to context. That’s why modern Scrum “trends” often look like either (a) changes in how people interpret the minimum, or (b) the rise of official-ish adjunct guides that help teams deal with flow, scaling, and product/portfolio realities without rewriting Scrum itself.

The most influential recent Scrum shift is still the 2020 update

The 2020 revision deliberately pushed Scrum back toward a minimally sufficient framework (less prescriptive language), emphasized one Scrum Team focused on one product, introduced Product Goal, and clarified the “commitments” linked to each artifact (Product Goal, Sprint Goal, Definition of Done). It also shifted language from “self-organizing” to self-managing.

Why it matters in 2026+: this language subtly supports modern trends like team autonomy, product focus, and outcome thinking, without turning Scrum into a heavyweight methodology. If your organization is still running “Scrum with a separate dev team and a PO on the outside,” you’re working against the direction the 2020 revision set.

Flow enters “Scrum reality” through the Kanban Guide for Scrum Teams

The Kanban Guide for Scrum Teams explicitly frames Kanban-in-Scrum as improving flow through the feedback loop. It defines the basic flow metrics teams should track (WIP, Cycle Time, Work Item Age, Throughput) and connects flow management to Little’s Law.

This is one of the clearest signals of where Scrum in practice is heading: less obsession with timeboxing as a religion, more focus on reducing cycle time, managing WIP, and increasing predictability while keeping empiricism.
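The four flow metrics and their link to Little’s Law can be sketched in a few lines. This is a minimal illustration, not the guide’s reference implementation: the `WorkItem` record, its field names, the day-counting convention (both end days included), and the sample dates are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class WorkItem:
    """Hypothetical work item record; the field names are illustrative."""
    started: date
    finished: Optional[date] = None  # None means the item is still in progress


def cycle_time_days(item: WorkItem) -> int:
    """Elapsed calendar days from start to finish, counting both end days."""
    if item.finished is None:
        raise ValueError("cycle time is only defined for finished items")
    return (item.finished - item.started).days + 1


def work_item_age_days(item: WorkItem, today: date) -> int:
    """Elapsed calendar days since an unfinished item was started."""
    return (today - item.started).days + 1


def throughput_per_day(items: List[WorkItem], start: date, end: date) -> float:
    """Items finished inside the window, divided by the window length in days."""
    done = [i for i in items if i.finished is not None and start <= i.finished <= end]
    return len(done) / ((end - start).days + 1)


def average_wip(items: List[WorkItem], start: date, end: date) -> float:
    """Mean number of items in progress per day across the window."""
    days = (end - start).days + 1
    total = 0
    for offset in range(days):
        day = start + timedelta(days=offset)
        total += sum(
            1 for i in items
            if i.started <= day and (i.finished is None or i.finished >= day)
        )
    return total / days


def littles_law_cycle_time(avg_wip: float, throughput: float) -> float:
    """Little's Law: average cycle time = average WIP / average throughput."""
    return avg_wip / throughput


# Made-up sample data: three finished items and one still open.
items = [
    WorkItem(date(2026, 3, 2), date(2026, 3, 4)),
    WorkItem(date(2026, 3, 3), date(2026, 3, 9)),
    WorkItem(date(2026, 3, 5), date(2026, 3, 6)),
    WorkItem(date(2026, 3, 9)),
]
start, end = date(2026, 3, 2), date(2026, 3, 11)

finished = [i for i in items if i.finished is not None]
measured = sum(cycle_time_days(i) for i in finished) / len(finished)
wip = average_wip(items, start, end)
rate = throughput_per_day(items, start, end)
estimated = littles_law_cycle_time(wip, rate)

print(f"measured average cycle time: {measured:.1f} days")
print(f"average WIP: {wip:.1f}, throughput: {rate:.2f} items/day")
print(f"Little's Law estimate: {estimated:.1f} days")
print(f"age of open item: {work_item_age_days(items[3], end)} days")
```

In this sample the Little’s Law estimate and the measured average do not match, because one item is still open at the end of the window. The relationship only holds on average for a reasonably stable system, which is a good reason to treat it as a conversation starter about WIP rather than a forecasting formula.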

Scaling isn’t “one true framework”—it’s a portfolio of tradeoffs

Scaling guidance increasingly reads like: “keep Scrum’s core; add the minimum glue for multi-team integration.” That principle is explicit in Nexus: it extends Scrum “only where absolutely necessary” to manage dependencies and integration across multiple teams working on one product.

At the same time, the market reality (especially in larger enterprises) is hybrid: organizations combine frameworks and delivery approaches. Digital.ai’s 17th State of Agile Report explicitly notes substantial hybrid-model usage (including Agile and DevOps mixes).

Enterprise-scale trends reshaping Agile delivery

Scaling frameworks are converging on the same hard problems

Different scaling approaches emphasize different levers (structure vs. minimalism vs. governance), but they’re all wrestling with the same issues: dependencies, integration, portfolio prioritization, and consistency of delivery.

Below is a pragmatic comparison that reflects what the official descriptions emphasize (and what typically goes wrong).

| Approach | What it fundamentally optimizes | Typical “good fit” | Strengths | Real risks / common failure modes |
|---|---|---|---|---|
| SAFe (by Scaled Agile, Inc.) | Alignment and governance from team → program → portfolio | Very large orgs needing structure, portfolio framing, and common language | Explicit portfolio/leadership constructs; strong emphasis on value streams and business agility themes | Heavyweight adoption can turn into bureaucracy; “doing SAFe” without engineering excellence becomes expensive theater |
| LeSS (Large-Scale Scrum) | Keeping Scrum “Scrum-like” at scale (one product, one backlog, one sprint) | Multi-team product development where simplification is culturally possible | Strong product focus (single backlog, single PO, common DoD); forces organizational design change | Requires deep org redesign; if you try to bolt it onto functional silos, it will fight back |
| Nexus (from Scrum.org ecosystem) | Integration and dependency management across 3–9 teams | Multiple Scrum teams on one product where integration pain is the bottleneck | “Minimum necessary” extension; keeps Scrum core intent; makes integration explicit | If architecture and CI/CD are weak, Nexus becomes a meeting factory rather than an integration solution |
| Scrum@Scale (by Scrum Inc. / Jeff Sutherland) | Coordination through “minimum viable bureaucracy” and scale-free structure | Orgs wanting a network-of-teams pattern with explicit scaling cycles | Designed as lightweight “scale-free architecture”; explicit focus on avoiding bureaucracy | Can be misunderstood as “Scrum of Scrums everywhere”; without clear product strategy, coordination can outgrow purpose |

Notes on sources: SAFe 6.0 and its themes/positioning are described on the official SAFe site. LeSS’ “one product, one backlog” emphasis and scaling limits are described on the official LeSS site. Nexus purpose/definition and “extend only where necessary” positioning are described by Scrum.org. Scrum@Scale’s “minimum viable bureaucracy” and intent are described in the official guide.

DevOps and metrics are standardizing around outcomes, not rituals

DORA’s metrics (now presented as five software delivery performance metrics grouped into throughput and instability) are described as a way to measure software delivery outcomes, and DORA explicitly warns about pitfalls like turning metrics into goals (Goodhart’s Law) or comparing disparate systems.

That matters for Agile because it pushes organizations toward a healthier question:

“Are we reliably delivering value faster?” instead of “Did we do the meetings?”
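To make the outcome question concrete, here is a minimal sketch that derives a subset of delivery measures (deployment frequency, median change lead time, change fail rate, and median recovery time) from deployment records. The `Deployment` record, its field names, and the sample data are hypothetical; a real setup would pull this from a CI/CD system and incident tooling, and would be measured per service or application rather than compared across unrelated systems.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Dict, List, Optional


@dataclass
class Deployment:
    """Hypothetical deployment record for one service; fields are illustrative."""
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it need remediation?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def delivery_summary(deployments: List[Deployment], period_days: int) -> Dict[str, Optional[float]]:
    """Summarize throughput and instability signals for one service and period."""
    if not deployments:
        raise ValueError("no deployments in the period")
    lead_times = [(d.deployed_at - d.committed_at).total_seconds() for d in deployments]
    failures = [d for d in deployments if d.failed]
    recoveries = [
        (d.restored_at - d.deployed_at).total_seconds()
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deployments_per_day": len(deployments) / period_days,
        "median_lead_time_hours": median(lead_times) / 3600,
        "change_fail_rate": len(failures) / len(deployments),
        "median_recovery_hours": median(recoveries) / 3600 if recoveries else None,
    }


# Made-up sample: four deployments in one week, one of which failed.
base = datetime(2026, 3, 2, 9, 0)
week = [
    Deployment(base, base + timedelta(hours=2)),
    Deployment(base + timedelta(days=1), base + timedelta(days=1, hours=4)),
    Deployment(
        base + timedelta(days=2),
        base + timedelta(days=2, hours=6),
        failed=True,
        restored_at=base + timedelta(days=2, hours=7, minutes=30),
    ),
    Deployment(base + timedelta(days=3), base + timedelta(days=4)),
]

summary = delivery_summary(week, period_days=7)
for name, value in summary.items():
    print(f"{name}: {value}")
```

Medians are used here because a single slow change would distort a mean; that choice is a design assumption of the sketch. The numbers are meant as trend signals for the team that owns the service, not as targets.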

Platform engineering is becoming the new “enterprise agility lever”

The 2024 DORA report highlights platform engineering’s promise and challenges, including the idea that internal developer platforms can increase productivity, that they’re more common in larger firms, and that performance can dip temporarily while the platform matures.

Meanwhile, the Cloud Native Computing Foundation defines platform engineering as building and maintaining self-service software development platforms that improve developer experience and optimize delivery—explicitly contrasting self-service with “ticket ops.” In other words: Agile at scale increasingly depends on whether teams can ship without begging for infrastructure in a Jira ticket.

A tangible example is Backstage (a developer portal framework): CNCF notes its acceptance timeline (Sandbox → Incubating) and positions it as an open framework for developer portals. Backstage’s own documentation explains the “software catalog + templates + docs-as-code” model as a way to standardize without killing autonomy.

AI augmentation: productivity up, delivery performance not guaranteed

This is the most important “modern trend” to treat like a grown-up:

- DORA’s 2024 highlights report that more than 75% of respondents rely on AI for at least one daily professional responsibility, and it reports productivity improvements for many developers.

- But it also reports a critical finding: increased AI adoption may correlate with decreased delivery throughput and stability, and it flags low trust in AI-generated code among a large portion of respondents.

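Independent research supports the productivity half of that picture: controlled experiments and field experiments show significant productivity improvements from AI pair programming (e.g., faster task completion and increased output). Those studies measure task-level output, though, not the delivery performance of the whole system.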

The modern Agile takeaway is not “buy AI, become elite.” It’s: AI amplifies your system. If your system has strong small-batch delivery, testing discipline, and clear quality bars, AI can help. If not, AI can help you ship bugs… faster.

Remote/hybrid is no longer “an exception,” but it requires design

Industry reports and research show that remote and hybrid work affect communication, social interaction, and meeting dynamics, often more than they affect tools and technical practices.

- A mixed-methods study on Agile software development one year into the COVID-19 pandemic, based on a questionnaire and interviews across multiple companies, reports impacts especially on communication and social interaction.

- A case study in a large-scale agile environment at Ericsson reports that meeting effectiveness in hybrid work depends on meeting intent: brainstorming/discussion-oriented meetings benefit from onsite attendance, while large information-sharing meetings work well remotely.

- A multi-method study on Scrum projects working from home concludes that working from home and Scrum both contribute to project success, and it emphasizes supporting autonomy/competence/relatedness needs in home working environments.

So, in “modern Agile,” facilitation skill is increasingly about communication architecture: explicit decision logs, async discovery/delivery touchpoints, intentional onsite moments, and psychologically safe participation norms.

Product-centric organizations and continuous discovery are pulling Agile upstream

The Agile Product Operating Model (APOM) explicitly frames a product operating model as a combination of modern product management, agile delivery, and Evidence-Based Management, and it breaks the model into Strategy, People, Structure (rules/tools/governance), and Value Cycle (discovery, delivery, operations).

This is a major trend: Agile is increasingly judged not by “team agility” but by the organization’s ability to continuously deliver value, adapt faster, and act under uncertainty.

On the measurement side, Evidence-Based Management defines itself as decision-making via experimentation and feedback—explicitly positioning goals and evidence loops as essential under uncertainty.

Outlook for the next five years

This section mixes evidence-based signals (2020–2026) with explicit projections. The goal is not fortune-telling; it’s to help you place bets. (Because yes, Agile now includes strategy bets, not only backlog items.)

What is very likely

AI becomes “standard tooling,” not a special initiative. DORA’s reported prevalence of daily AI use is already high, and the direction is clear: AI is now embedded in normal software work (writing, summarizing, explaining).

Projection: Over the next five years, most teams will treat AI assistance as normal—similar to how static analysis and CI became “just part of work.” The differentiator will be governance: trust calibration, evaluation, and workflow design that protects delivery performance.

Platform engineering moves from “cool” to “necessary.” DORA’s focus on platform engineering and CNCF’s push for platform-as-a-product maturity both suggest mainstreaming.

Projection: By the end of the period, mid-to-large organizations will increasingly expect some form of internal developer platform and self-service golden paths; teams without it will be at a competitive disadvantage in cycle time and cognitive load.

Hybrid work stays—more intentionally. Both academic evidence and industry framing point toward hybrid as a sustained mode, with emphasis on meeting intent and social mechanisms.

Projection: The winners will treat hybrid like a system design problem: interaction patterns, meeting purpose, documentation discipline, and onboarding playbooks.

What is likely, but uneven

Scaling shifts from “framework wars” to “flow + product + governance integration.” SAFe explicitly emphasizes value streams, flow, and business agility themes, while product operating models and value stream management (VSM) vocabulary are spreading.

Projection: Less focus on picking one framework; more focus on stitching together: product strategy, funding/portfolio decisions, delivery flow, and reliability. Different industries will land differently (regulated vs. consumer tech), but the direction is the same.

Metrics mature—or they get weaponized. DORA explicitly warns about setting metrics as goals and making misleading comparisons.

Projection: Organizations will either (a) learn to use DORA/flow/OKR/EBM measures as learning tools, or (b) rebuild the same old command-and-control behavior with new dashboards. The latter will be common… because humans.

Mermaid timeline of adoption

Practical playbook for practitioners and managers

Recommended actions by role

For Scrum Masters and Agile Coaches: your job is increasingly “system stewardship,” not meeting facilitation.

Treat these as a baseline modernization backlog:

- Re-anchor on Product Goal and transparency: make Product Goal and Sprint Goal visible, and treat commitments as non-negotiable clarity tools (not paperwork).

- Add flow metrics without killing empiricism: track WIP, cycle time, work item age, throughput; use them to run experiments and reduce bottlenecks rather than to police people.

- Make psychological safety operational: create explicit norms for dissent, asking questions, and surfacing risks. Google’s team effectiveness research highlights psychological safety as the top dynamic; Edmondson defines it as a shared belief the team is safe for interpersonal risk-taking.

- Hybrid facilitation becomes a technical skill: apply meeting-intent rules (brainstorming onsite, info-sharing remote), and build async habits (decision logs, lightweight docs).

For Product Owners / Product Managers: “delivery” without discovery is just a faster way to build the wrong thing.

- Implement a continuous discovery cadence (weekly touchpoints, small research activities) and connect it to outcomes rather than features.

- Use OKRs carefully: keep objectives few, key results outcome-based, and avoid turning OKRs into a team task list.

For Engineering Managers / Heads of Engineering:

- Invest in platform engineering as a product: internal customers, paved roads, and measurable DevEx improvements—expect a temporary dip during implementation, because reality has loading time.

- Use DORA metrics as learning signals: measure at the service/application level, avoid cross-context comparisons, and don’t convert them into quotas.

- AI governance is now part of engineering management: define what “acceptable AI usage” means for code, tests, and docs; build review/testing guardrails to prevent the “we shipped faster, and now we’re faster at incident response” lifestyle.

For executives and portfolio leaders:

- Shift funding and prioritization toward value streams and product strategy, not temporary project teams that dissolve the moment learning starts. (Yes, I know… budgeting.)

- Adopt an evidence-based management loop: goals → hypotheses → experiments → measures → adaptation.

Tooling and practices map

This table is intentionally “category-first,” because vendors change faster than Agile buzzwords.

| Need | Practices that matter | Typical tool categories (examples) | Pros | Watch-outs |
|---|---|---|---|---|
| Work visualization & planning | Transparency, slicing work, dependency visibility | Agile planning tools; portfolio planning tools | Helps coordination, shared visibility | Easy to become process theater if output metrics dominate |
| Flow management | WIP limits, cycle-time focus, bottleneck experiments | Kanban analytics / flow dashboards | Improves predictability and throughput | Misused as individual performance tracking |
| Delivery performance | Small batches, CI/CD discipline, reliability practices | CI/CD platforms; change management automation | Direct impact on lead time and stability | Measuring without improving wastes time; DORA warns against focusing on measurement at the expense of improvement |
| Platform engineering / DevEx | Self-service, platform as product, golden paths | Internal developer platforms; developer portals (e.g., Backstage) | Reduces cognitive load, standardizes safely | Platform becomes another silo if not product-managed |
| AI augmentation | AI usage guidelines, human-in-the-loop review | AI coding assistants; AI knowledge tools | Productivity gains shown in studies | Can reduce delivery performance and trust if fundamentals are weak |
| Outcomes & alignment | OKRs, EBM goal levels, evidence loops | OKR tools; product analytics | Aligns work to impact | OKRs become a checklist; EBM warns about outputs vs outcomes |
| Governance & compliance | DevSecOps, policy-as-code, continuous monitoring | Security scanning, SBOM tooling, policy engines | Faster compliance feedback, less late-stage friction | Requires real automation maturity; not just “add another approval” |

Risks and challenges to plan for

A few modern risks are predictable enough to call out:

AI “productivity mirage.” DORA reports productivity and quality improvements in some areas, but also a negative association with delivery throughput/stability and low trust in AI-generated code. The risk is optimizing local productivity while degrading system outcomes.

Platform engineering as a performance dip trap. DORA notes a potential temporary performance decrease during platform implementation before improvements manifest. If leadership expects immediate ROI without a maturation curve, they’ll kill the platform right before it starts helping.

Metric gaming. DORA explicitly warns against setting metrics as goals (Goodhart’s Law) and against comparing different systems. If you pay bonuses for deployment frequency, you’ll get… creative deployment frequency.

Hybrid collaboration debt. Research notes the impact of reduced social interaction and altered communication patterns, and hybrid meeting studies emphasize meeting-intent decisions. If you don’t design hybrid intentionally, you accumulate “misalignment interest” until it becomes a conflict balloon payment.

Compliance becoming the new bottleneck (unless automated). NIST describes DevSecOps as enabling agile and secure development through CI/CD, security testing, and continuous monitoring supported by automation. The DoD cATO approach similarly frames continuous assessment and authorization supported by DevSecOps platforms and continuous monitoring. The risk is the inverse pattern: if controls stay manual and late-stage, faster delivery simply queues up in front of the approval step.

Representative mini case studies and examples

AI adoption reality check (cross-industry survey evidence).

DORA’s 2024 highlights show broad daily AI usage and reported productivity benefits, but also an association with decreased delivery throughput and stability and low trust in AI-generated code. The practical lesson: don’t treat AI as a substitute for small-batch delivery and robust testing; treat it as an amplifier that needs guardrails.

Platform engineering in the open: Backstage and developer portals.

CNCF documents Backstage’s incubation path and describes it as a developer portal framework; the project’s documentation positions it as centralizing catalog, templates, and docs-as-code to improve shipping speed without compromising autonomy. This is a concrete example of “platform as product” becoming mainstream practice.

Regulated environments: continuous authorization and DevSecOps.

The DoD cATO implementation guidance frames a move away from point-in-time assessment toward continuous risk determination and authorization, enabled by continuous monitoring, active cyber defense, and approved DevSecOps reference designs. NIST’s DevSecOps guidance emphasizes CI/CD pipelines, security testing, and continuous monitoring supported by automation. This is the direction modern governance is heading: controls that live in the pipeline, not in meetings.
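As a rough illustration of “controls that live in the pipeline,” the sketch below evaluates a release candidate against a small set of policies expressed as code. Everything here is hypothetical: the `BuildEvidence` fields, the three policy names, and the pass/fail rules are assumptions for the example and are not taken from the NIST or DoD guidance. Real setups typically use a dedicated policy engine rather than hand-written checks.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class BuildEvidence:
    """Hypothetical evidence a pipeline collects for one release candidate."""
    tests_passed: bool
    critical_vulnerabilities: int
    sbom_attached: bool


# A policy is a readable name plus a check that returns True when satisfied.
Policy = Tuple[str, Callable[[BuildEvidence], bool]]

# Illustrative policy set; a real organization would define its own controls.
POLICIES: List[Policy] = [
    ("automated tests pass", lambda e: e.tests_passed),
    ("no critical vulnerabilities", lambda e: e.critical_vulnerabilities == 0),
    ("SBOM attached", lambda e: e.sbom_attached),
]


def violations(evidence: BuildEvidence, policies: List[Policy] = POLICIES) -> List[str]:
    """Return the names of all policies the evidence fails, in policy order."""
    return [name for name, check in policies if not check(evidence)]


def release_allowed(evidence: BuildEvidence) -> bool:
    """A release may proceed only when no policy is violated."""
    return not violations(evidence)


candidate = BuildEvidence(tests_passed=True, critical_vulnerabilities=2, sbom_attached=False)
clean = BuildEvidence(tests_passed=True, critical_vulnerabilities=0, sbom_attached=True)

print(violations(candidate))
print(release_allowed(clean))
```

The point of the design is that the gate returns named violations on every run, so teams get compliance feedback at commit time instead of in a late-stage review meeting.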

Hybrid meetings in large-scale agile: meeting intent as a design rule.

The Ericsson case study (hybrid work in a large-scale agile environment) reports that meeting effectiveness varies by intent—discussion-heavy sessions benefit from onsite presence, while information sharing works well remotely. This is actionable: stop trying to make every ceremony “hybrid by default” without considering what the meeting is for.

Sources

- Scrum Guides — Scrum Guide (2020 version, HTML)

- Scrum Guides — Scrum Guide revisions and changes (2017 → 2020)

- Scrum.org — Nexus Guide (online version)

- Scrum.org — Kanban Guide for Scrum Teams (flow + metrics)

- Scrum.org — Evidence-Based Management Guide (definition + goal levels)

- Scrum.org — Agile Product Operating Model overview

- DORA (Google Cloud) — 2024 DORA report landing page

- Google Cloud Blog — Highlights from the 10th DORA report (AI + platform engineering findings)

- DORA — Software delivery performance metrics guide (five metrics + pitfalls)

- DORA — History of DORA metrics (shift to five-metric model, 2024)

- Digital.ai — 17th State of Agile Report page (hybrid model stats, 2023 context)

- Digital.ai — 18th State of Agile Report page (AI + hybrid + outcomes framing)

- SAFe (Scaled Agile Framework) — What’s new in SAFe 6.0

- SAFe — Framework landing / Big Picture navigation

- LeSS — Official framework overview (LeSS vs LeSS Huge, one product backlog emphasis)

- CNCF — What is platform engineering? (definition, platform as product, self-service vs ticket ops)

- CNCF — Platform Engineering maturity model announcement

- CNCF — Backstage project page (acceptance/Incubating timeline)

- Backstage documentation — What is Backstage? (catalog/templates/docs-as-code positioning)

- CNCF — Backstage project joins incubator (origin + adoption notes)

- Springer / Empirical Software Engineering — Agile software development one year into COVID-19 (remote work impacts, mixed methods)

- PubMed Central — Working from home and Scrum project success (multi-method, autonomy/relatedness needs)

- Ericsson hybrid meeting case study (ResearchGate-hosted full text)

- Google re:Work — Team effectiveness and psychological safety (Project Aristotle summary)

- Amy Edmondson — Psychological safety definition (1999 paper)

- Google re:Work — OKR guide (writing OKRs, outcomes vs activities, public alignment)

- NIST SP 800-204C — DevSecOps for microservices (CI/CD, security testing, continuous monitoring, automation)

- DoD CIO — DevSecOps cATO implementation guide (continuous authorization framing)

- AI pair programming evidence — controlled experiment (arXiv)

- AI productivity evidence — field experiment (MIT)

- AI productivity evidence — GitHub research blog post

- Value Stream Management definitions — SAFe VSM definition and IBM VSM overview

- Flow Framework community overview (VSM + flow metrics framing)