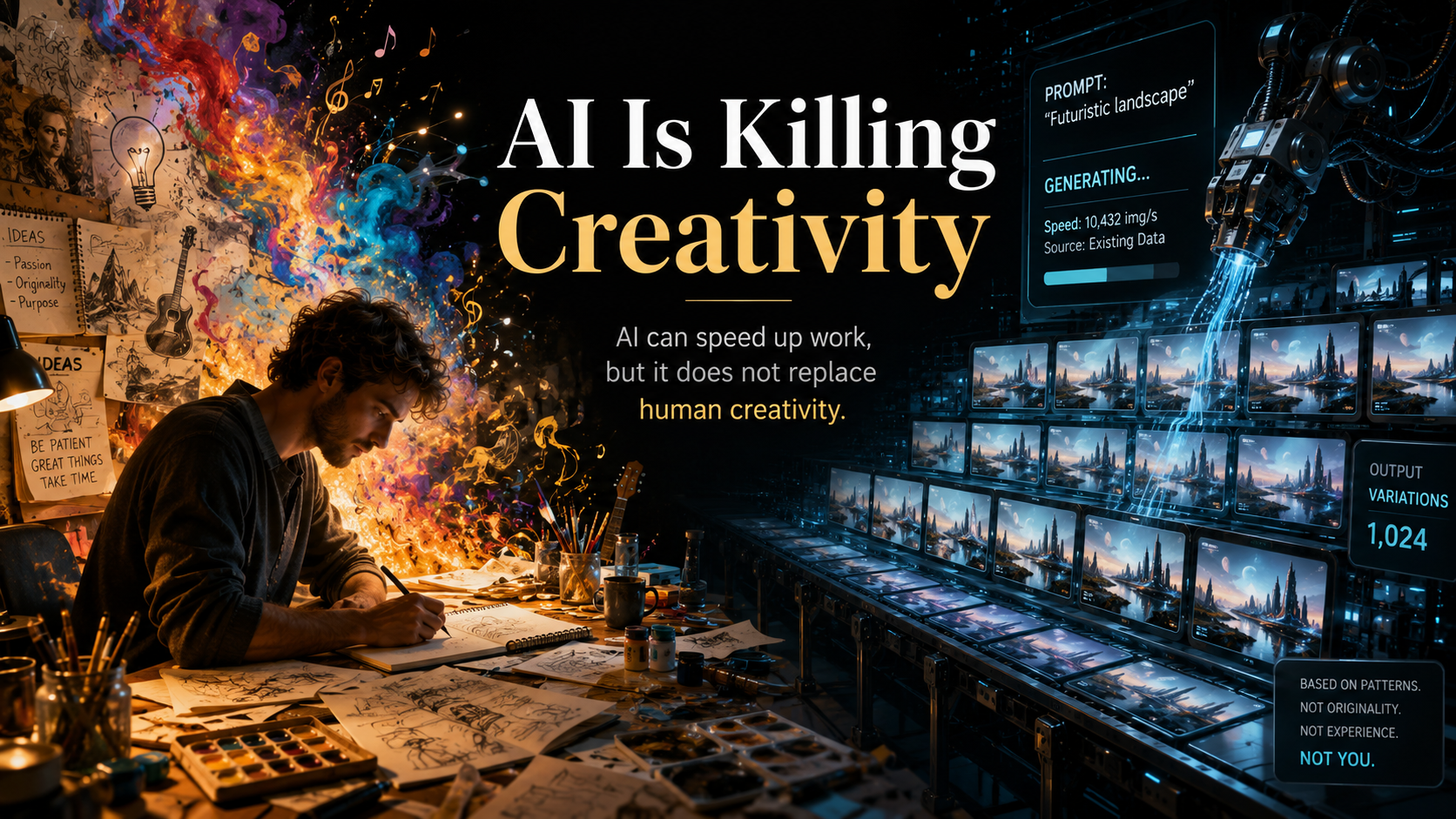

AI Is Killing Creativity

AI can speed up work, but it does not replace human creativity. It is generative, not truly creative, and it builds on patterns from what already exists.

Arkadiusz Kozieł

AI is often sold as a creativity machine, but that’s an oversimplification. In practice, AI is not creative in the human sense. It does not invent from lived experience, curiosity, risk, or intent. It is generative: it recombines patterns learned from existing material and produces outputs that feel new because the mix is new, not because the system understands why something matters.

That difference matters more than many teams realize. A human designer, writer, product owner, or engineer can look at a problem and ask: what is the real issue here, what are we trying to change, and what would be brave rather than obvious? AI usually starts from the opposite place. It predicts a likely continuation, whether a word, an image, or a code fragment, based on patterns in what already exists. That makes it useful, fast, and often surprisingly good, but not original in the deeper sense.

The real risk is not that AI destroys creativity overnight. The risk is that people stop practicing it. When every first draft comes from a prompt, teams may skip the uncomfortable part: thinking from scratch, making connections, challenging assumptions, and tolerating weak early ideas long enough for better ones to emerge. Creativity is not the polished result. It is the messy process before the result, and that process is exactly what gets outsourced too easily.

In product and delivery work, this shows up fast. Teams can use AI to generate user stories, meeting notes, design options, test cases, or architecture suggestions. That saves time. But if the team accepts the first plausible answer, it starts to optimize for convenience instead of insight. I’ve seen this in Scrum teams: the backlog looks full, the documentation looks clean, and yet the actual product thinking becomes flatter because no one is digging into the hard questions anymore.

There is also a subtle homogenization effect. Since AI is trained on what already exists, it tends to produce safe, average, familiar outputs. That is fine when you need a draft, but dangerous when you need a point of view. The most valuable ideas in any team usually come from someone noticing what the model would never notice: the awkward edge case, the real user pain, the business constraint nobody wants to name, or the unusual solution that looks odd at first but changes everything.

So the answer is not to reject AI. The answer is to use it for what it is good at: acceleration, exploration, variation, and reducing repetitive work. Then keep the creative responsibility where it belongs—with people. Ask AI for options, not decisions. Ask it for alternatives, not truth. Ask it to widen the search, not close it. The moment you let AI define the problem, you often lose the chance to solve the right one.

Creativity is still a human advantage, but only if we protect it intentionally. Teams should use AI to remove friction, not to remove thinking. If AI becomes a shortcut around judgment, originality will suffer. If it becomes a tool that gives people more time and energy to think deeply, then creativity does not disappear; it gets stronger. The problem is not AI's supposed creativity. The problem is that it can make us forget what creativity actually requires.